Adapting to online learning during COVID-19

Grok Learning is an online platform for university-level teaching of programming-related subjects. It’s also used in many Australian schools to teach the new Digital Technologies curriculum. At Grok we work closely with our university partners to support their innovative teaching methods within our platform, and collaborate on creating content that makes the most of our technology. In this article we look back at how we’ve collaborated with the University of Melbourne in response to the recent COVID-19 crisis.

It seems like a long time ago already, but COVID-19 only became a global issue a few months ago, just as universities were starting their first semester of the year. Initially the main issue for Australian universities was that international students were unable to fly in to start their studies on campus, raising questions about how to provide a remote learning experience from overseas. Gradually this shifted to campuses being closed to all students, and a growing need for assessments to be conducted online. Now, even with daily Australian COVID-19 cases down to single digits, there is still caution and uncertainty over on-campus teaching in Semester 2.

Teaching during COVID

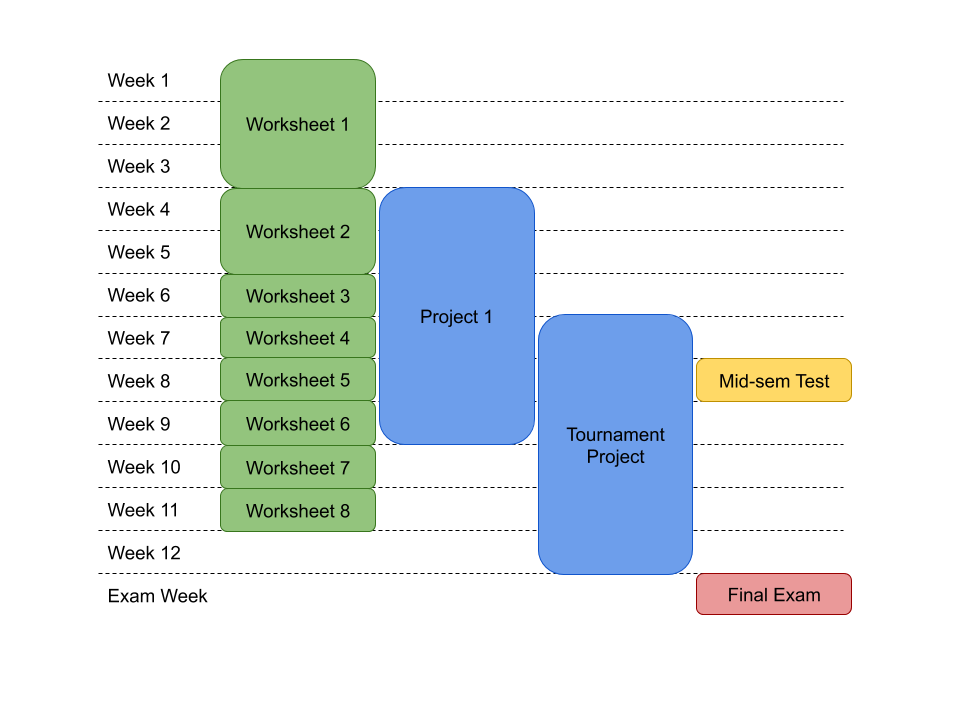

One of our major customers is Professor Tim Baldwin of the University of Melbourne, whose COMP10001 Foundations of Programming subject enrolled over a thousand students in Semester 1. The subject makes heavy use of Grok: there are weekly online programming exercise worksheets; a major project assignment completed online; and a second project in which students write code to play a card game, with all students’ submissions pitted against each other in a tournament hosted on the Grok platform. The tutoring team used Grok’s tutor messaging system to provide 1-on-1 help to students struggling with programming problems. The subject was already well set up to provide a strong online education experience, despite being structured around the standard on-campus teaching components of lectures and face-to-face tutorial classes, plus a paper-based mid-semester test and final exam.

Outline of the COMP10001 activity schedule, delivered online via Grok for Semester 1, 2020. Worksheets are auto-graded, while the projects, mid-semester test and final exam use Grok’s online rubric marking.

Responding with rapid innovation

Every year we use feedback from our users to select new developments to improve our platform. We also provide custom development for users willing to fund specific work. At the start of 2020 we were already part-way through implementing a rubric-based manual marking system to help tutors mark the large number of project submissions for COMP10001. But with COVID-19, Tim asked us whether we could change tack to help with a more urgent issue: how to support online exams.

Grok courses typically focus on formative assessment, in which students use automated feedback to incrementally improve their attempts to solve a problem. Exams have quite different requirements:

- Strict time limits;

- Strong security safeguards to prevent cheating; and

- A wide range of question styles, beyond writing code and multiple choice.

There was also the issue of marking exams, which would be performed by tutors rather than through automated tests. Fortuitously, this last aspect was covered by the manual marking system we already had in development.

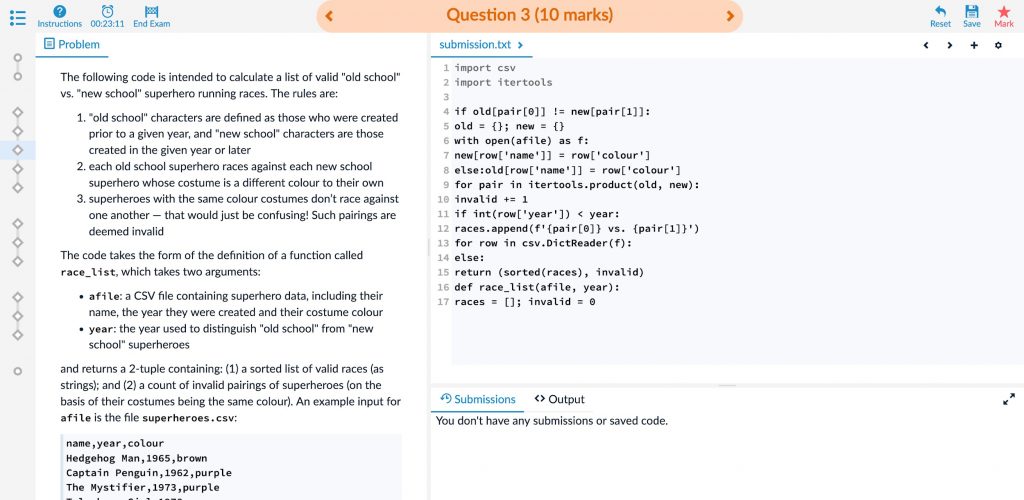

Our first step was to adapt the paper exams to a format we could deliver from Grok. Previous exams used a mix of question types, including Parsons-style (rearranging scrambled lines of a function), fill-in-the-blank, and short-answer and long-answer questions. Rather than build a special-purpose problem type for each, we concentrated on making a general-purpose problem type that could handle all of them.

In parallel with this work, we also developed a new exam session mode for delivering exam questions to students under timed conditions, with some security restrictions enforced under the hood.

Example of a Parsons (scrambled code) problem delivered in an exam session. The countdown timer and the button to end the exam are visible in the top-left toolbar.

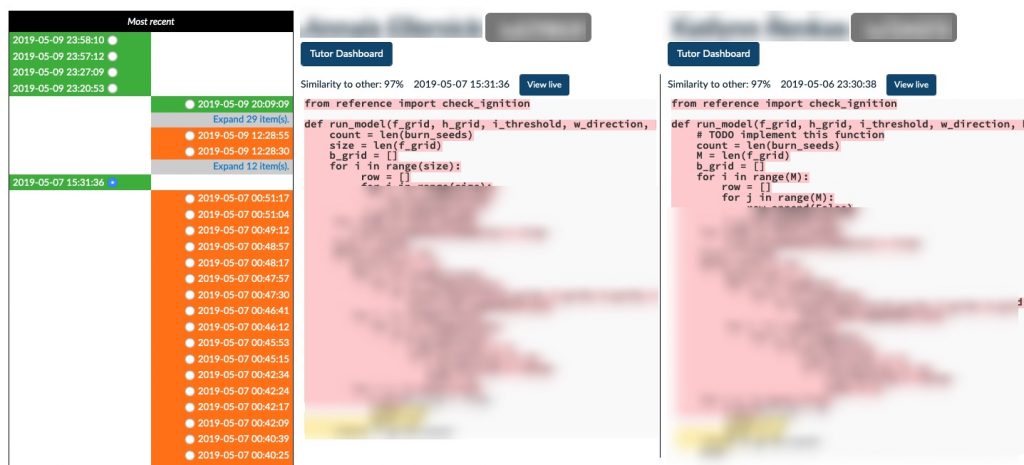

Additionally, we extended our plagiarism detection tool to cover students’ responses to exam questions, as a deterrent to academic dishonesty. During exam sessions, students’ work is saved regularly, so plagiarism detection can spot code reuse that students may try to disguise before making their final submission. This complements other tools at examiners’ disposal, such as remote invigilation, which can be provided by services outside Grok.

Example of plagiarism detection within Grok, with identifying details obscured. Two students’ early attempts are virtually identical, with differences subsequently added to mask similarities.
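To illustrate why regular saving matters, here is a minimal sketch, not Grok’s actual detector. It assumes each student’s saved snapshots are available as a list of strings, and it uses Python’s standard `difflib.SequenceMatcher` as a stand-in similarity measure; the function names `similarity` and `max_pairwise_similarity` are hypothetical. Comparing every saved snapshot of one student against every snapshot of another lets near-identical early attempts surface even when the final submissions have been edited to look different.

```python
import difflib
from typing import List, Optional, Tuple


def similarity(a: str, b: str) -> float:
    """Return a similarity ratio in [0, 1] between two code snapshots."""
    return difflib.SequenceMatcher(None, a, b).ratio()


def max_pairwise_similarity(
    history_a: List[str], history_b: List[str]
) -> Tuple[float, Optional[int], Optional[int]]:
    """Compare every saved snapshot of student A with every snapshot of
    student B, returning the highest ratio and the snapshot indices where
    it occurs (hypothetical helper; a brute-force, quadratic comparison)."""
    best: Tuple[float, Optional[int], Optional[int]] = (0.0, None, None)
    for i, snap_a in enumerate(history_a):
        for j, snap_b in enumerate(history_b):
            score = similarity(snap_a, snap_b)
            if score > best[0]:
                best = (score, i, j)
    return best


# Illustrative data: both students' first saved snapshot is the same code.
early = (
    "def total(xs):\n"
    "    s = 0\n"
    "    for x in xs:\n"
    "        s += x\n"
    "    return s\n"
)
# Student A submits the early attempt almost unchanged.
student_a = [early, early + "# final version\n"]
# Student B starts from the same code, then renames variables to mask it.
student_b = [
    early,
    "def total(values):\n"
    "    result = 0\n"
    "    for v in values:\n"
    "        result = result + v\n"
    "    return result\n",
]

print(max_pairwise_similarity(student_a, student_b))  # prints (1.0, 0, 0)
```

In this toy example the final submissions differ, but the identical first snapshots still produce a ratio of 1.0. A production tool would likely normalise identifiers and whitespace first and use a more scalable fingerprinting approach rather than this pairwise comparison.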

Conclusions

Although final exams are still to come, our experience with these developments so far has been very positive. The COMP10001 mid-semester test ran successfully, using Grok-based versions of all the questions usually delivered on paper. Running the test online has also brought some benefits. For instance, students’ responses are no longer obscured by poor handwriting. Tutors can also be guided by the results of automated tests run on students’ submissions. We’re also experimenting with giving students some simple feedback to help them avoid careless mistakes. Most importantly, students have received their marks and feedback through rubric scorecards within Grok, which has improved the quality of the feedback they receive.

So what have we gained from this experience? The collaboration between Grok and the University of Melbourne has resulted in improvements that neither party could have achieved alone. We have provided platform improvements and support that allow exams to be delivered online to over a thousand students isolated at home within Australia or stranded overseas. In return, we’ve gained essential feedback and testing of these new developments, which have greatly improved the final result.

So where next? While this semester’s work was chiefly a response to a crisis, many universities may need to run exams for off-campus students during Semester 2, and possibly beyond. Furthermore, running exams online rather than on paper may remain advantageous even after the COVID-19 crisis has passed. For instance, mid-semester quizzes, where concerns about cheating are less critical, might be well suited to students sitting them at home under timed conditions. And even for invigilated on-site exams, running them online makes marking easier.

Whatever happens, we’ve enjoyed our collaboration with such an engaged and enthusiastic teaching team at the University of Melbourne, and the opportunity to develop something to improve the learning experience for students.

To find out more about how Grok Learning can work with you to improve your online learning offerings, visit groklearning.com/universities or contact uni-support@groklearning.com

Email rcox@intermedia.com.au